- Product Manager at SAP

- AI Platform Architect at SAP

Artificial Intelligence (AI) has become essential for business innovation, enabling companies to unlock new revenue streams, automate processes, and make data-driven decisions automatically and at scale.

There is industry-wide agreement that Kubernetes provides an ideal platform for running AI workloads (see Cloud Native AI Whitepaper). Furthermore, the CNCF community is defining infrastructure-level AI Conformance, which will make Kubernetes ubiquitous for AI workloads.

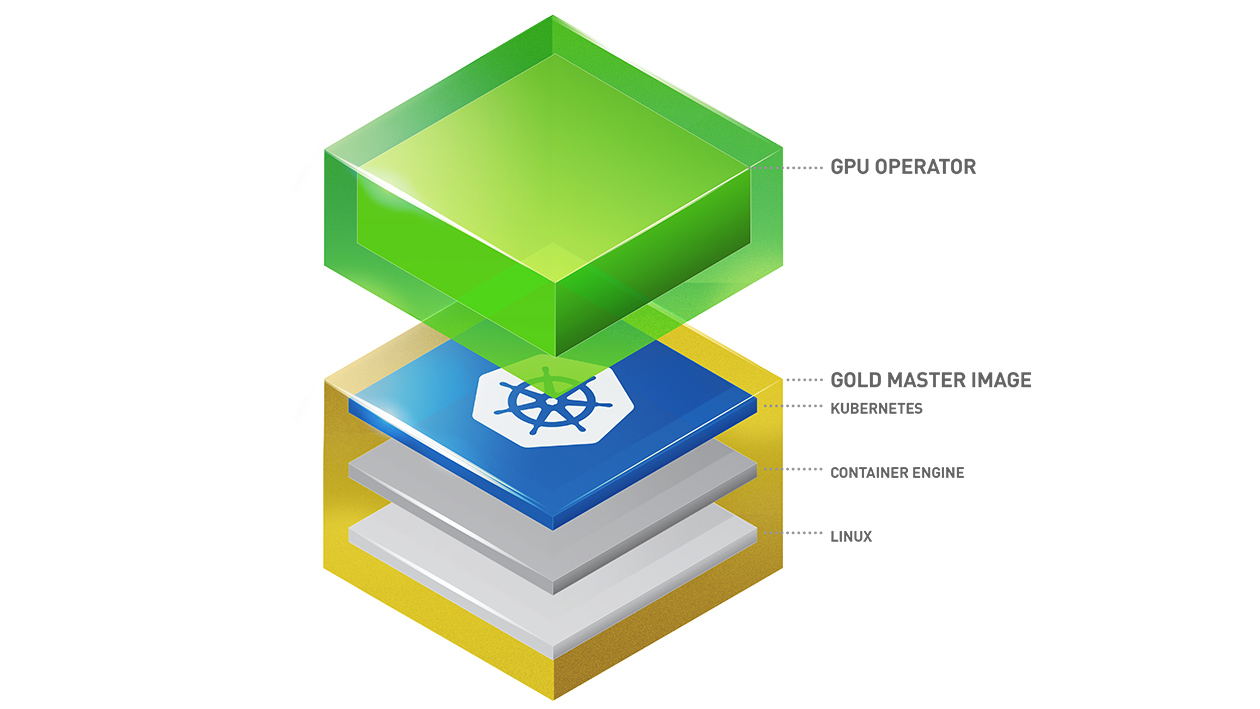

But for Kubernetes to support GPUs, you need the worker nodes' operating systems enabled with the right GPU drivers and associated access frameworks.

This may seem like an obvious, pragmatic requirement at the infrastructure level. But embedded in the fully open-source Apeiro Reference Architecture, which is governed and supported by the (industry) members of the NeoNephos Foundation, its impact is substantial: Apeiro freely empowers any organization or consortium seeking to build sovereign, modern datacenters for leveraging AI.

Participation and contributions are not only welcome, but directly connect to the broader joint AI imperative of business.

This is easier said than done. There is significant operational complexity to consider: multi-cloud and hybrid environments, different hardware, diverse operating systems, complex driver management, and varying cloud provider configurations.

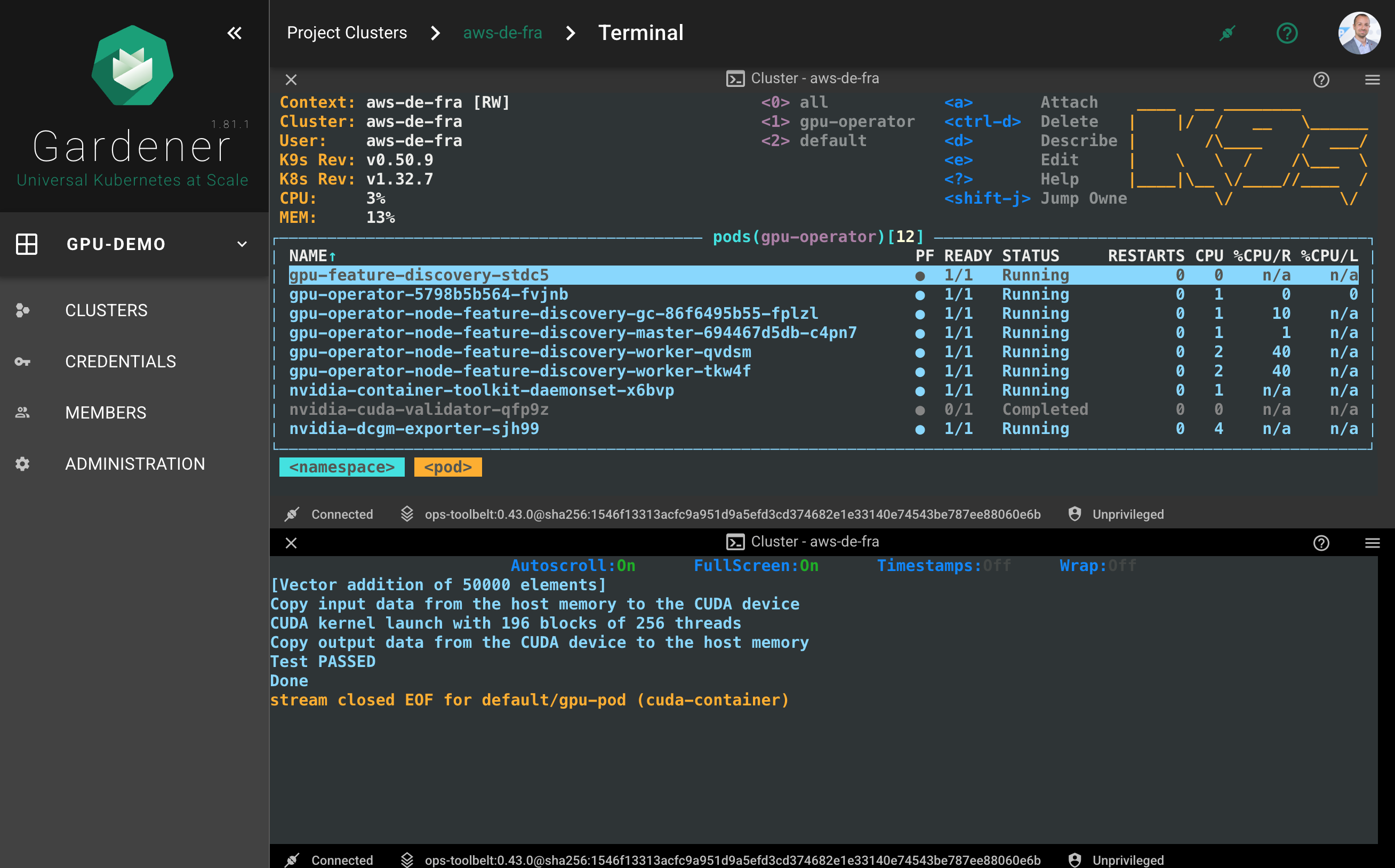

In Apeiro, we offer Gardener and Garden Linux to tackle such operational complexity. With the NVIDIA GPU Operator, we can provide a unified AI-conformant Kubernetes platform that works across any infrastructure with NVIDIA Data Center GPUs.

The NVIDIA GPU Operator automates GPU support in Kubernetes by deploying all the required software components (drivers, CUDA, device plugins, etc.) in the right ABI-compatible versions. It eliminates any manual GPU driver installation and configuration, and enables GPUs as native Kubernetes resources. The NVIDIA GPU Operator is a Kubernetes-native operator with custom resource definitions. Furthermore, it ensures consistent GPU functionality across different hardware nodes and configurations, while enabling automatic updates, scaling, and troubleshooting through standard Kubernetes APIs.
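Once the operator is running, GPU nodes advertise their GPUs under the `nvidia.com/gpu` resource name. The following is a minimal sketch for listing that value per node, assuming `kubectl` is already configured for the cluster. The function name `list_gpu_capacity` is illustrative and not part of any tool.

```shell
# List every node together with the number of GPUs it reports as allocatable.
# Nodes without GPUs show <none> in the GPU column.
# The dot in "nvidia.com" is escaped so kubectl treats it as part of the key.
list_gpu_capacity() {
  kubectl get nodes \
    -o 'custom-columns=NAME:.metadata.name,GPU:.status.allocatable.nvidia\.com/gpu'
}
```

Calling `list_gpu_capacity` after the installation described further down is a quick way to see which worker nodes the scheduler can place GPU workloads on.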

The NVIDIA GPU Operator is architected in a modular way so anyone who wants to build GPU Driver containers can make the NVIDIA GPU Operator work with their operating system. This is what we have done and we are making it publicly available. We used the public NVIDIA GPU Driver Dockerfile to create functional Garden Linux GPU Driver images. Please feel free to use them and collaborate by sharing feedback within the Garden Linux gardenlinux-nvidia-installer repository.

Garden Linux builds containers for the three latest active NVIDIA driver branches on all Garden Linux versions that are in maintenance.

As of August 2025, this means containerized GPU drivers for the following combinations of major releases are available:

| Garden Linux | NVIDIA Driver |
|---|---|
| 1592 | 570, 565, 550 |
| 1877 | 570, 565, 550 |

We automated the support directly in our build pipelines.

With guidance from NVIDIA[1], Garden Linux's build and release process was adjusted to automatically publish the ABI-compatible container images required by the NVIDIA GPU Operator.

An automated workflow immediately creates a pull request for new driver versions. Hence, Garden Linux provides you with the latest GPU driver updates with zero effort! The results are published in Garden Linux's GitHub container registry ghcr.io/gardenlinux/gardenlinux-nvidia-installer with the release workflow.

Orchestrating the publishing of the drivers, wrapped in the correct container format needed by the NVIDIA GPU Operator, requires two major steps:

1. The new driver is compiled against the specific container-based environment and the exact Linux kernel version used in Garden Linux.

2. After step 1 completes successfully, the new driver is packaged as an OCI container that the NVIDIA GPU Operator can pick up at runtime (cf. the "nvidia-driver" entry point).

The NVIDIA GPU Operator is installed using a Helm Chart provided in the NVIDIA Helm repository. Running the NVIDIA GPU Operator on Garden Linux requires a specific set of configuration values in gpu-operator-values.yaml.

For sovereign (and air-gapped) environments, you need to maintain your own repository and set it in the driver.repository value of the Helm chart.

The example below assumes you have:

Create Kubernetes cluster.

You can use any (and different) worker nodes with NVIDIA GPUs.

Install Helm

Follow the NVIDIA GPU Driver Getting Started Operator Installation Guide to prepare Helm.

It is important to add the NVIDIA Helm repository before proceeding to the next step.
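For reference, adding the repository usually comes down to two Helm commands. The sketch below wraps them in a function so they can be reused in a setup script. The function name `add_nvidia_helm_repo` is illustrative, and the repository URL is the one published in NVIDIA's installation guide, so check it against the current guide before relying on it.

```shell
# Register the NVIDIA Helm repository under the local name "nvidia"
# and refresh the local chart index afterwards.
add_nvidia_helm_repo() {
  helm repo add nvidia https://helm.ngc.nvidia.com/nvidia &&
    helm repo update
}
```

The local name `nvidia` matters because the install command in the next step refers to the chart as `nvidia/gpu-operator`.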

Install the NVIDIA GPU Operator

You can continue with the guide from Step 2 or use the example from the Garden Linux NVIDIA Installer. It is important to:

- make sure the gpu-operator namespace exists before installation, or add the Helm flag --create-namespace as in the command below.

- use the Helm flag --values with the value https://raw.githubusercontent.com/gardenlinux/gardenlinux-nvidia-installer/refs/heads/main/helm/gpu-operator-values.yaml as demonstrated below.

```shell
helm upgrade --install -n gpu-operator --create-namespace gpu-operator nvidia/gpu-operator --values \
  https://raw.githubusercontent.com/gardenlinux/gardenlinux-nvidia-installer/refs/heads/main/helm/gpu-operator-values.yaml
```

By default, the values file above selects the latest supported driver version. If you really need a different one, you can set the driver.version property to any version available in the Garden Linux NVIDIA Driver Package Repository.
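Both `driver.repository` (for your own registry) and `driver.version` can be overridden on the command line without editing the values file. The following is a minimal sketch, assuming the NVIDIA Helm repository has been added under the name `nvidia`. The function name `install_gpu_operator` and the registry host used in the usage note are hypothetical. In a truly air-gapped setup the values file and the other operator images would also have to be mirrored, which this sketch does not cover.

```shell
VALUES_URL="https://raw.githubusercontent.com/gardenlinux/gardenlinux-nvidia-installer/refs/heads/main/helm/gpu-operator-values.yaml"

# Install or upgrade the operator with optional overrides.
#   $1: driver image repository (empty keeps the value from the values file)
#   $2: driver version          (empty keeps the value from the values file)
install_gpu_operator() {
  repository="${1:-}"
  version="${2:-}"
  # Collect the Helm arguments in the positional parameters (POSIX-safe list).
  set -- --values "$VALUES_URL"
  if [ -n "$repository" ]; then
    set -- "$@" --set "driver.repository=$repository"
  fi
  if [ -n "$version" ]; then
    # --set-string keeps purely numeric versions from being parsed as integers.
    set -- "$@" --set-string "driver.version=$version"
  fi
  helm upgrade --install -n gpu-operator --create-namespace \
    gpu-operator nvidia/gpu-operator "$@"
}
```

For example, `install_gpu_operator registry.example.internal/gardenlinux-nvidia-installer` would point the operator at a mirrored driver repository, while `install_gpu_operator "" <version>` would only pin the driver version.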

Test GPU availability (optional)

You can verify that NVIDIA GPU Operator has worked correctly using a sample job from the NVIDIA k8s-device-plugin repository. Deploy the following GPU pod manifest:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: gpu-pod
spec:
  restartPolicy: Never
  containers:
    - name: cuda-container
      image: nvcr.io/nvidia/k8s/cuda-sample:vectoradd-cuda12.5.0
      resources:
        limits:
          nvidia.com/gpu: 1 # requesting 1 GPU
  tolerations:
    - key: nvidia.com/gpu
      operator: Exists
      effect: NoSchedule
```

If everything is working correctly, the container log should include the message Test PASSED.
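The check can also be scripted. The following is a minimal sketch, assuming the manifest above was saved as `gpu-pod.yaml` (the file name is an assumption) and that your `kubectl` supports `--for=jsonpath` in `kubectl wait` (version 1.23 or later). The function name `run_gpu_smoke_test` is illustrative.

```shell
# Apply the sample manifest, wait for the pod to finish, and check its log.
#   $1: path to the manifest (defaults to gpu-pod.yaml)
run_gpu_smoke_test() {
  manifest="${1:-gpu-pod.yaml}"
  kubectl apply -f "$manifest" || return 1
  # The pod runs once (restartPolicy: Never), so wait until it has succeeded.
  kubectl wait --for=jsonpath='{.status.phase}'=Succeeded pod/gpu-pod --timeout=300s || return 1
  if kubectl logs gpu-pod | grep -q "Test PASSED"; then
    echo "GPU test passed"
  else
    echo "GPU test failed" >&2
    return 1
  fi
}
```

The function returns a non-zero status when the pod does not complete in time or the expected message is missing, so it can serve as a gate in a pipeline.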

With the NVIDIA GPU Operator working out of the box, we plan to offer a complete end-to-end experience as a service: the end user orders a Kubernetes cluster via Gardener with everything preset. We will work with the community on a Gardener Enhancement Proposal (GEP), with the goal of presenting the integrated experience as an extension like the one shown below.

```yaml
kind: Shoot
...
spec:
  extensions:
    - type: nvidia-gpu-extension
      providerConfig:
        cdi:
          enabled: true
          default: true
        toolkit:
          installDir: /opt/nvidia
        driver:
          imagePullPolicy: Always
          usePrecompiled: true
          repository: ghcr.io/gardenlinux/gardenlinux-nvidia-installer
...
```

Watch our 5-minute demo and see how it works end-to-end!

Our Apeiro community encourages you to share feedback or report any issues you encounter while using the NVIDIA GPU Operator on Garden Linux. Please open an issue in the gardenlinux-nvidia-installer repository.

The team values your contributions and is eager to hear about your experience.

[1] Thanks to Jathavan Sriram from NVIDIA for the productive discussions.